Viva Connections: a centralized hub for communication

Create a curated workplace experience that simplifies a digital workplace. Viva Connections is a centralized solution within the Microsoft 365 ecosystem.

There exists a lot of reasons why organizations are forced to migrate their data. Data migration can be performed manually or by using special tools. The process becomes more challenging if the migration is to be performed for a complex application with huge amount of data. Data Accuracy is a key aspect that should be verified when loading data from one source to a target system. How do you verify data accuracy, completeness of data (if all data is moved/loaded) and if it matches all the rules? These questions could arise if you are verifying data during or after migration.

In Data Validation testing, one of the fundamental testing principles is at work: ‘Early Testing’. The faster a QA Engineer starts analyzing requirements, business rules, data analysis, creating test scripts and TCs, the faster the issues can be revealed and removed. This will also lead to a decrease in overall costs.

Let’s define the objectives of data migration testing:

→ Migrating data is not simply transferring information from one storage to another. It is the complex work of a QA team and it requires skill, expertise, tools, and resources.

So, it’s time to go through the customary steps that should be taken during Data Validation testing within the migration process:

Test Planning is the most important activity in any test project. This step presupposes the following actions:

During Test Analysis activity, QA will create TCs with emphasis on how each item of data is mapped and migrated from source to target. It will also include a design of how the migration is validated through testing and define non-functional aspects to test.

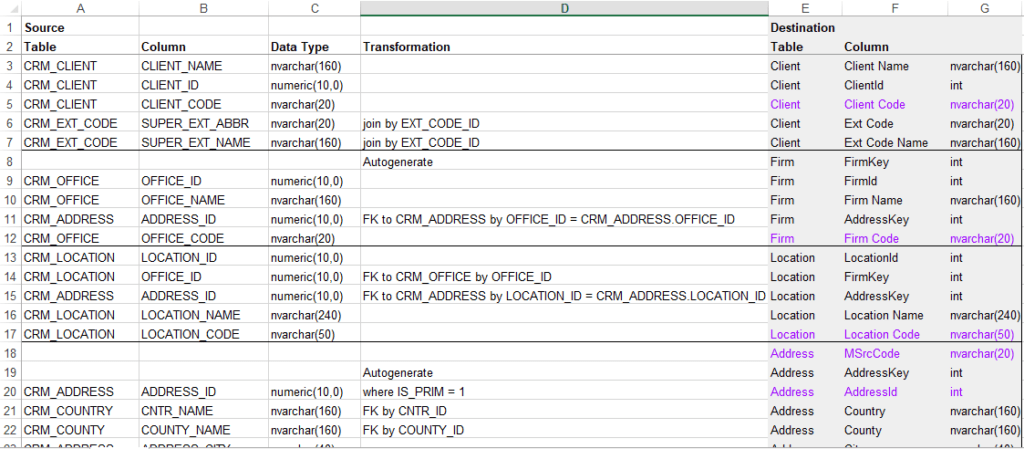

Review documentation and check the mapping of each data type in the legacy system and the new system. Make a note that during the migration, based on the transformation rules, some columns may be combined into one column; some column names, tables may change names or tables.

Then, according to the documentation, check the DB scheme to ensure that it includes mandatory fields, field names, field types, data types, etc., for both, the original data source and the destination system and find any mistakes or error areas and correct as much as possible.

A good mapping document is supposed to represent the following data from both source and target fields:

Take into account if any fields that are required in the new application are not required in the old one and make sure that the value of these fields are not equal to NULL.

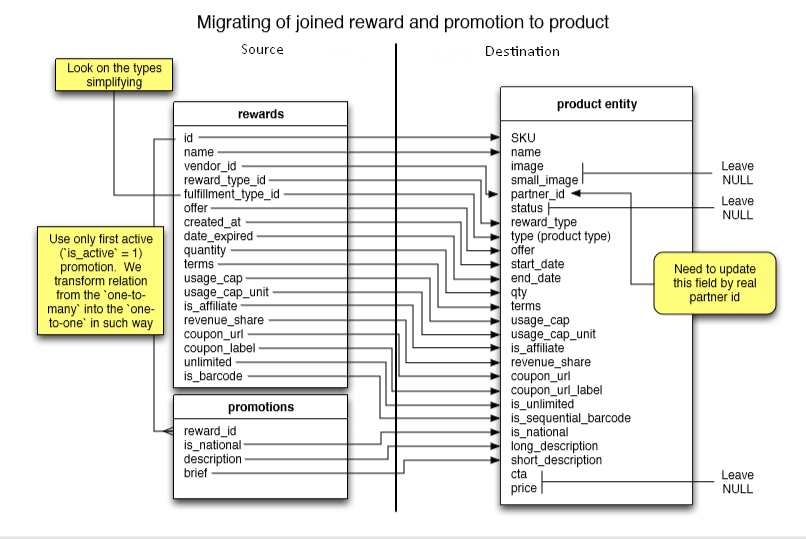

Mapping is a useful instrument for data loss prevention in case if you need to deploy tables or columns that have been renamed. It (mapping with business rules) can be represented in different ways, for example: in a simple table (excel) or in a graphical view.

Fig 1. Mapping table example

Fig 1. Mapping table example

Fig2. Mapping in graphical view example

Fig2. Mapping in graphical view example

Data Completeness includes :

Record count verification.

Record count checks consist of 2 scenarios:

In order to get a number of a record in a target or source table we can use a simple query

SELECT COUNT (*)

FROM table (changed)Data Quality ensures that the data is correctly loaded into the destination tables/fields, and also that the application rejects, substitutes default values, alters, ignores, and reports invalid data correctly .

Comparing source and target data sets. For example, in order to compare data in source DB with destination DB two types of queries can be created:

SELECT

t.name,

v.vendor_name,

p.description,

p.brief

FROM rewards r

INNER JOIN promotions p ON r.id = p.reward_id

INNER JOIN vendor v ON r.vendor_id = v.vendor_idSELECT

p.name,

v.partner_name,

p.long_description,

p.short_descryption

FROM product_entity p

INNER JOIN vendor v ON p.partner_id = v.partner_idVerification Data validation. It includes the following:

SELECT column1, COUNT (column2)

FROM table

GROUP BY column1

HAVING COUNT (column2) > 1Verification of the deleted records. Based on the requirements, some data can be deleted.

Verification of Distinct Values. For example, a requirements document specified that a column in the target table should have distinct columns. Then, the next query can be used:

SELECT DISTINCT <Column Name> FROM <Table Name>.

Verification of many source rows into one target row. A Requirements Document can specify that some rows should be combined into one. An example of the query:

SELECT s.srcCode, s. srcName, t. Name

FROM (SELECT Code, CONCAT(col1, ‘-’, col2) as Name FROM src_table) s

INNER JOIN (SELECT Code, Name FROM dest_table) t ON s.Code = t.Code

WHERE s.Name <> t.NameInspection of correct handling of invalid data types in the fields.

Inspection of the correct handling of the NULL values.

→ Learn about data validation testing techniques and approaches to help you ensure the data you deal with is correct and complete.

Ones migration has been performed, QA should start running TCs that are created during the design phase and compare results. How do you compare huge data files? There are a lot of free or commercial tools for that. Data can be compared in SQL Data Compare or Red Gate. Also, the exported files can be compared with ‘Total Commander’ or Excel.

Data validation will make sure that data migration was done, meeting all business rules and mapping, but it does not necessarily mean that in the destination environment everything works well. So, the next steps are really important. That is, we should perform:

This phase presupposes checking the following:

You know that testing is a live procedure. So any professional might come across the whole range of issues. Here, I’ll touch upon the most common ones. Those include:

As it’s more and more about data and its quality nowadays, businesses need to drive maximum value utilizing it. Migration of data is not just the process of physically moving data from one storage to another. When data is extracted from one source, transformed, and finally loaded into the destination place, it is the best time to check its quality. Data migration testing is a viable instrument which capacitates organizations get the best insights from their data, but it requires specialized skills, expertise, tools, and resources.

→ Explore Avenga’s quality management across various domains

Ready to innovate your business?

We are! Let’s kick-off our journey to success!